-

Creative Talks #30: Dina Jashari

-

Creative campaign for Mental Health Awareness day

-

Fielmann glasses change our worldview

-

This is your brain on…

-

Imagine an office without gender bias. Is it real?

-

How “Tube Girl” took over the world

-

When the copy is just so good…

-

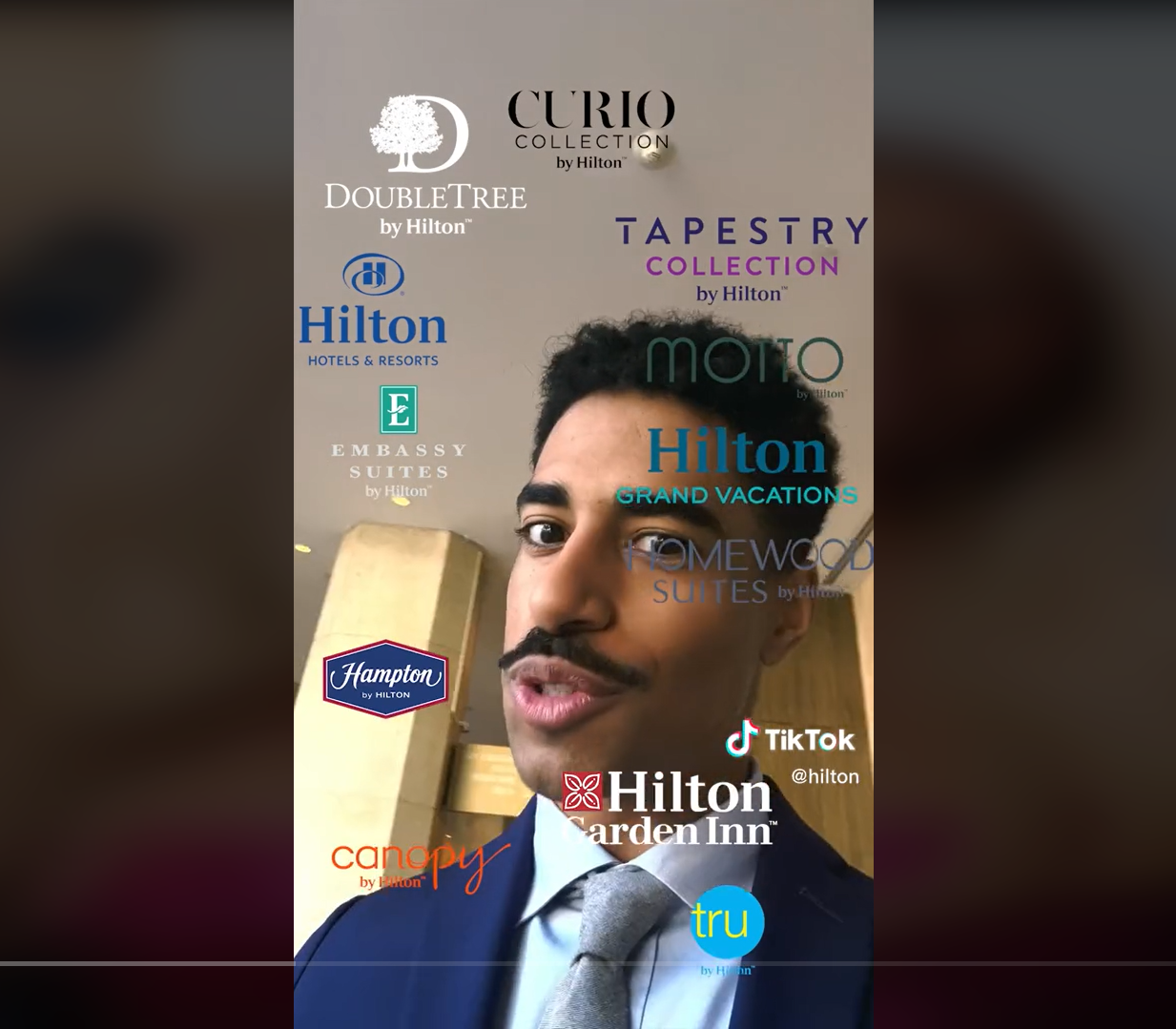

I’m sorry, but the Hilton TikTok ad sucks

When I started this blog, I promised myself I’d use it to spread positivity and share examples that inspire and motivate readers to do better and more. But there are some exceptions. 🙂 Hilton’s 10-minute TikTok ad has been all over the media, showered with so much praise that it actually makes me wonder: is it all paid…

-

A wonderfully different ad

-

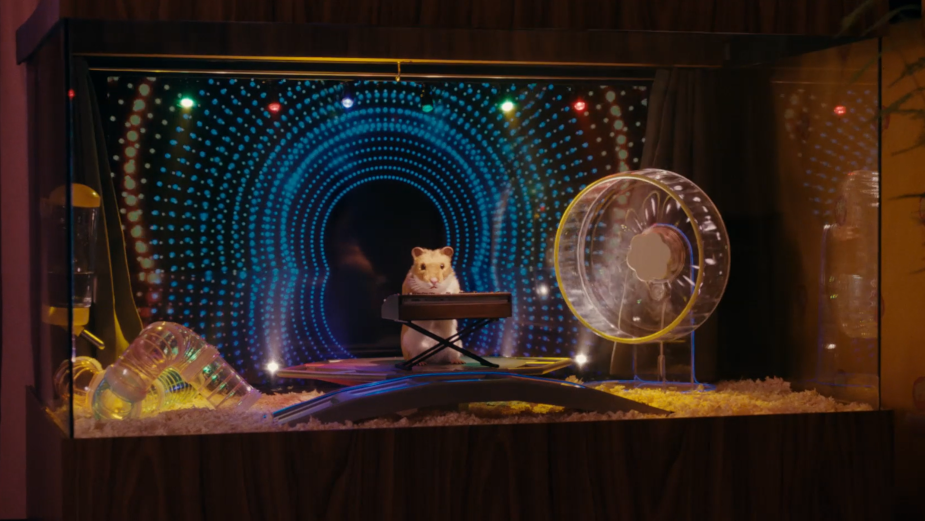

Super Bowl Favorites 2023

Although it’s a little late and everyone has probably forgotten about the Super Bowl, I just had to take my pick and continue the tradition of choosing the best Super Bowl ads. So aside from Rihanna’s awesome Fenty product placement, which definitely has no competition, these are my favorites from this year’s not-so-great Super Bowl…